
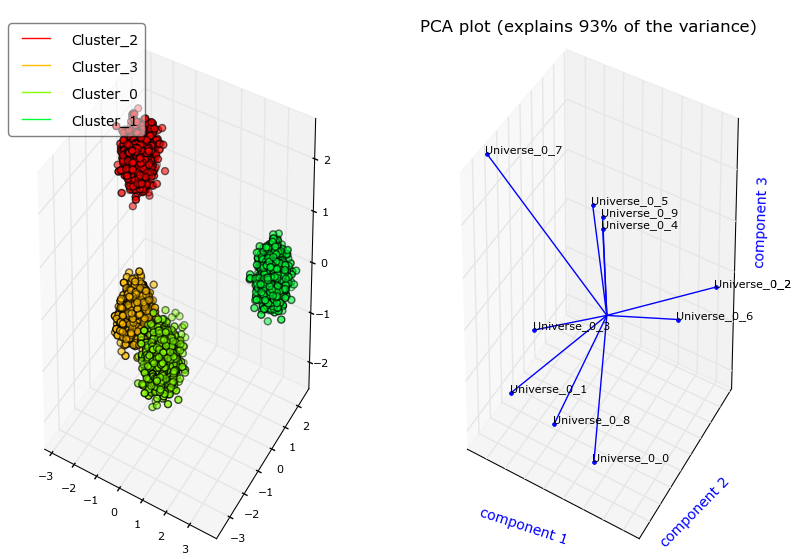

The principal component breakdown by features that you have there basically tells you the "direction" each principal component points in, expressed in terms of the original features. The important features are the ones that influence the components the most and thus have a large absolute value on the component.

To get the most important features on the PCs by name and save them into a pandas DataFrame: import `PCA` from `sklearn.decomposition`, fit the model with `model = PCA(n_components=2).fit(train_features)`, get the index of the most important feature on each component by taking `argmax()` of the absolute component values for `i in range(n_pcs)`, map those indices to the feature names (`most_important_names`), and collect the result in a dictionary (`dic`) before building the DataFrame. In this example, feature `e` is the most important on PC1 and feature `d` on PC2.
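The steps described above can be sketched roughly as follows. The list-comprehension bodies are a reconstruction, and the toy data (10 random samples, 5 features named `a` to `e`) and the DataFrame column names are assumptions made for illustration, so the features it reports will not necessarily be `e` and `d`.

```python
import numpy as np
import pandas as pd
from sklearn.decomposition import PCA

# Assumption: toy data, 10 samples with 5 features named 'a'..'e'
rng = np.random.default_rng(0)
train_features = rng.random((10, 5))
initial_feature_names = ["a", "b", "c", "d", "e"]

model = PCA(n_components=2).fit(train_features)

# number of components kept by the model
n_pcs = model.components_.shape[0]

# get the index of the most important feature on EACH component,
# i.e. the feature with the largest absolute value on that component
most_important = [int(np.abs(model.components_[i]).argmax()) for i in range(n_pcs)]

# map the indices back to feature names
most_important_names = [initial_feature_names[most_important[i]] for i in range(n_pcs)]

# one entry per component: 'PC1' -> name, 'PC2' -> name
dic = {"PC{}".format(i + 1): most_important_names[i] for i in range(n_pcs)}

# build the dataframe (column names are hypothetical)
df = pd.DataFrame(sorted(dic.items()), columns=["component", "most_important_feature"])
print(df)
```

Only the single strongest feature per component is kept here; sorting `np.abs(model.components_[i])` instead of taking `argmax()` would give a full ranking.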

So the higher the value in absolute terms, the higher the influence on the principal component.

After performing the PCA, people usually plot the well-known biplot to see the transformed data in the N dimensions (2 in our case) together with the original variables (features).

Example using the iris data: import `numpy as np` and `StandardScaler` from `sklearn.preprocessing` (in general it is a good idea to scale the data), then draw the projected samples with `plt.scatter(xs, ys, c=y)`, here without rescaling the scores.
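A minimal biplot sketch along those lines, using the iris data shipped with scikit-learn. The helper name `biplot`, the arrow scaling factor, and the colours are assumptions for illustration, not the original answer's code.

```python
import numpy as np
import matplotlib.pyplot as plt
from sklearn.datasets import load_iris
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler

iris = load_iris()
y = iris.target

# In general it is a good idea to scale the data before PCA
X = StandardScaler().fit_transform(iris.data)

pca = PCA(n_components=2)
scores = pca.fit_transform(X)   # transformed samples, shape (150, 2)
loadings = pca.components_.T    # one row per original feature, shape (4, 2)


def biplot(scores, loadings, y, labels):
    """Scatter the samples on PC1/PC2 and draw one arrow per original feature."""
    xs, ys = scores[:, 0], scores[:, 1]
    fig, ax = plt.subplots()
    ax.scatter(xs, ys, c=y)  # scores plotted without rescaling

    # Assumption: stretch the arrows so they are visible next to the scores
    arrow_scale = np.abs(scores).max()
    for j, name in enumerate(labels):
        dx, dy = loadings[j, 0] * arrow_scale, loadings[j, 1] * arrow_scale
        ax.arrow(0, 0, dx, dy, color="r", alpha=0.5)
        ax.text(dx * 1.15, dy * 1.15, name, color="g", ha="center", va="center")

    ax.set_xlabel("PC1")
    ax.set_ylabel("PC2")
    ax.grid(True)
    return fig, ax


fig, ax = biplot(scores, loadings, y, iris.feature_names)
```

Call `plt.show()` or `fig.savefig(...)` afterwards to view the figure. Arrows that point far along an axis mark the features with a large absolute value on that component.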


In your case, the value -0.56 for feature E is the score of this feature on PC1. This value tells us how much the feature influences the PC (in our case PC1).


Terminology: First of all, the results of a PCA are usually discussed in terms of component scores, sometimes called factor scores (the transformed variable values corresponding to a particular data point), and loadings (the weight by which each standardized original variable should be multiplied to get the component score).

PART1: I explain how to check the importance of the features and how to plot a biplot.

PART2: I explain how to check the importance of the features and how to save them into a pandas dataframe using the feature names.
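To make the relationship between scores and loadings concrete, here is a small sketch on toy data (10 random samples with 5 features, an assumption for illustration). It treats the rows of scikit-learn's `components_` as the loadings, which matches the usage in this text; some references additionally scale loadings by the square root of the explained variance.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler

# Assumption: toy data, 10 samples with 5 features
rng = np.random.default_rng(0)
X = rng.random((10, 5))

# Standardize each original variable (zero mean, unit variance)
X_std = StandardScaler().fit_transform(X)

pca = PCA(n_components=2).fit(X_std)
loadings = pca.components_        # weights per feature, shape (2, 5)
scores = pca.transform(X_std)     # component scores, shape (10, 2)

# Each score is the weighted sum of the standardized variables
manual_scores = X_std @ loadings.T

print(np.allclose(scores, manual_scores))
```

The check works because the standardized data already has zero mean per column, so the centering that `transform` applies changes nothing.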
